Well Placement Advisor: from data to decision

The opportunity

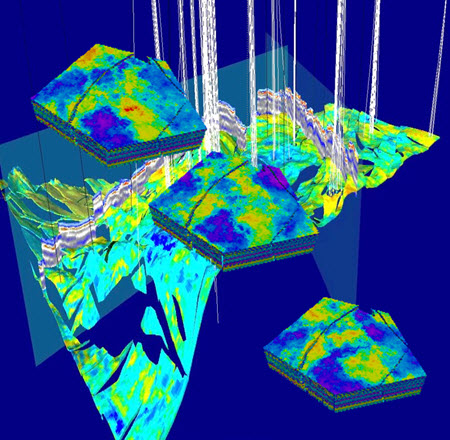

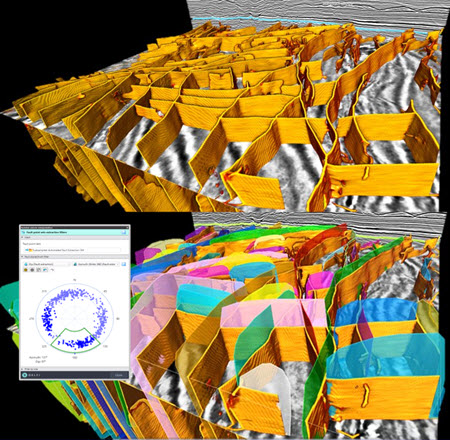

The digital era has brought virtually unlimited, on-demand compute power. For subsurface characterization, this makes it possible to generate many more realizations of a geologic hypothesis and to explore a wider range of possibilities in a fraction of the time. This could drastically shorten field development planning cycles, which is much needed in an increasingly volatile market. That potential can only be realized if petroleum engineers have adequate analytical tools to assess and investigate hundreds or thousands of cases, whether static or dynamic.

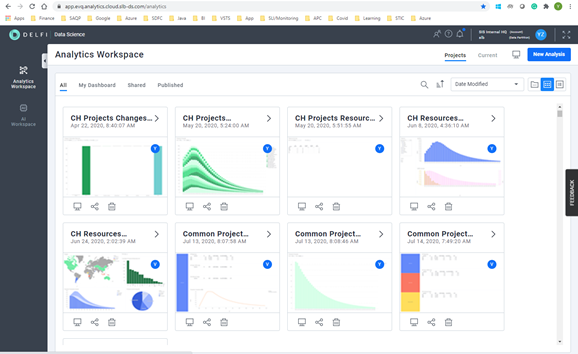

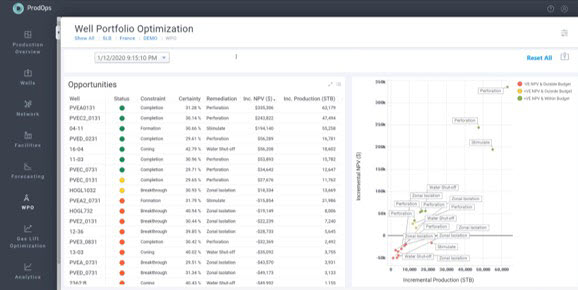

The solution

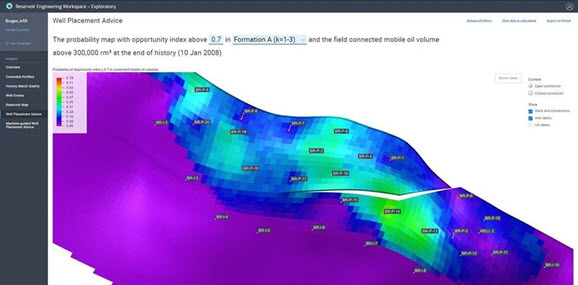

A new generation of reservoir engineering tools and workflows is entering the market, including an expert-guided machine learning well placement solution that identifies connected volumes with high oil saturation. This technique works on ensembles of reservoir models to deliver robust field development optimization under subsurface uncertainty, in a highly automated fashion. The methodology is embedded in a structured workflow designed to improve a baseline well location design.
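To make the idea of "connected, highly saturated oil volumes across an ensemble" concrete, here is a minimal sketch in plain Python. It is not the solution's actual algorithm, which the text does not describe. It assumes each realization is a small 2D grid of oil saturation values, that cells are connected when they share a face, and that a region counts only if it contains at least `min_cells` cells. The function names, the threshold, and the toy data are all hypothetical.

```python
from collections import deque


def connected_regions(saturation, threshold):
    """Group cells with saturation >= threshold into face-connected regions.

    Returns a list of sets of (row, col) tuples, one set per region.
    """
    n_rows, n_cols = len(saturation), len(saturation[0])
    seen = set()
    regions = []
    for r in range(n_rows):
        for c in range(n_cols):
            if (r, c) in seen or saturation[r][c] < threshold:
                continue
            # Breadth-first flood fill starting from an unvisited qualifying cell.
            region = set()
            queue = deque([(r, c)])
            seen.add((r, c))
            while queue:
                i, j = queue.popleft()
                region.add((i, j))
                for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ni, nj = i + di, j + dj
                    if (
                        0 <= ni < n_rows
                        and 0 <= nj < n_cols
                        and (ni, nj) not in seen
                        and saturation[ni][nj] >= threshold
                    ):
                        seen.add((ni, nj))
                        queue.append((ni, nj))
            regions.append(region)
    return regions


def sweet_spot_probability(ensemble, threshold, min_cells):
    """Per-cell fraction of realizations in which the cell belongs to a
    connected high-saturation region of at least min_cells cells."""
    n_rows, n_cols = len(ensemble[0]), len(ensemble[0][0])
    counts = [[0] * n_cols for _ in range(n_rows)]
    for saturation in ensemble:
        for region in connected_regions(saturation, threshold):
            if len(region) >= min_cells:
                for i, j in region:
                    counts[i][j] += 1
    n_real = len(ensemble)
    return [[counts[i][j] / n_real for j in range(n_cols)] for i in range(n_rows)]


# Toy ensemble of two realizations on a 3x3 grid (illustrative values only).
ensemble = [
    [[0.8, 0.7, 0.1],
     [0.2, 0.9, 0.1],
     [0.1, 0.1, 0.6]],
    [[0.8, 0.2, 0.1],
     [0.7, 0.9, 0.1],
     [0.1, 0.1, 0.1]],
]
prob_map = sweet_spot_probability(ensemble, threshold=0.5, min_cells=3)
```

In this toy case, cells that sit inside a sufficiently large connected region in both realizations get a probability of 1.0, while the isolated high-saturation cell in the first realization is filtered out by `min_cells`. A real workflow would operate on 3D grids and would likely weight cells by pore volume, which this sketch omits.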

The result

This work proposes an iterative improvement of well location designs that combines probabilistic well ranking to identify low-performing wells, probability maps to understand reservoir performance, and analytics-based optimization steps that target large, connected volumes with high oil saturation. The methodology is described, and application results are presented for a full optimization loop. The structured approach highlights the value of novel learning techniques in providing an efficient and manageable way to optimize a well location design under subsurface uncertainty.
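One way to read "probabilistic well ranking" is to rank wells within each realization and then ask how often a given well lands at the bottom. The sketch below illustrates that reading only; the source does not specify the ranking metric or the cutoff. The performance values, well names, `bottom_k`, and the 0.5 cutoff are hypothetical.

```python
def low_performer_probability(production, bottom_k):
    """Estimate how often each well ranks among the worst bottom_k wells.

    production maps a well name to a list of performance values, one per
    realization (for example cumulative oil). Returns a dict mapping each
    well to the fraction of realizations in which it is in the bottom_k.
    """
    wells = sorted(production)
    n_real = len(production[wells[0]])
    counts = {w: 0 for w in wells}
    for r in range(n_real):
        # Rank wells from worst to best within this realization.
        ranked = sorted(wells, key=lambda w: production[w][r])
        for w in ranked[:bottom_k]:
            counts[w] += 1
    return {w: counts[w] / n_real for w in wells}


# Illustrative values only: three wells, four realizations.
production = {
    "W1": [120.0, 110.0, 130.0, 125.0],
    "W2": [60.0, 95.0, 55.0, 50.0],
    "W3": [90.0, 80.0, 100.0, 70.0],
}
prob = low_performer_probability(production, bottom_k=1)

# Wells that are the worst performer in at least half of the realizations
# become candidates for relocation in the next optimization iteration.
candidates = [w for w in sorted(prob) if prob[w] >= 0.5]
```

Here `W2` is the worst well in three of four realizations, so it is the only relocation candidate. Ranking per realization, rather than ranking on the ensemble mean, keeps the result robust to a single realization with extreme values.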